Recently I used NotebookLM to review a tool I built to remove signs of AI writing from drafts. What the two AIs told me was surprising: one said this was a bad idea, and the other said it was even worse than that.

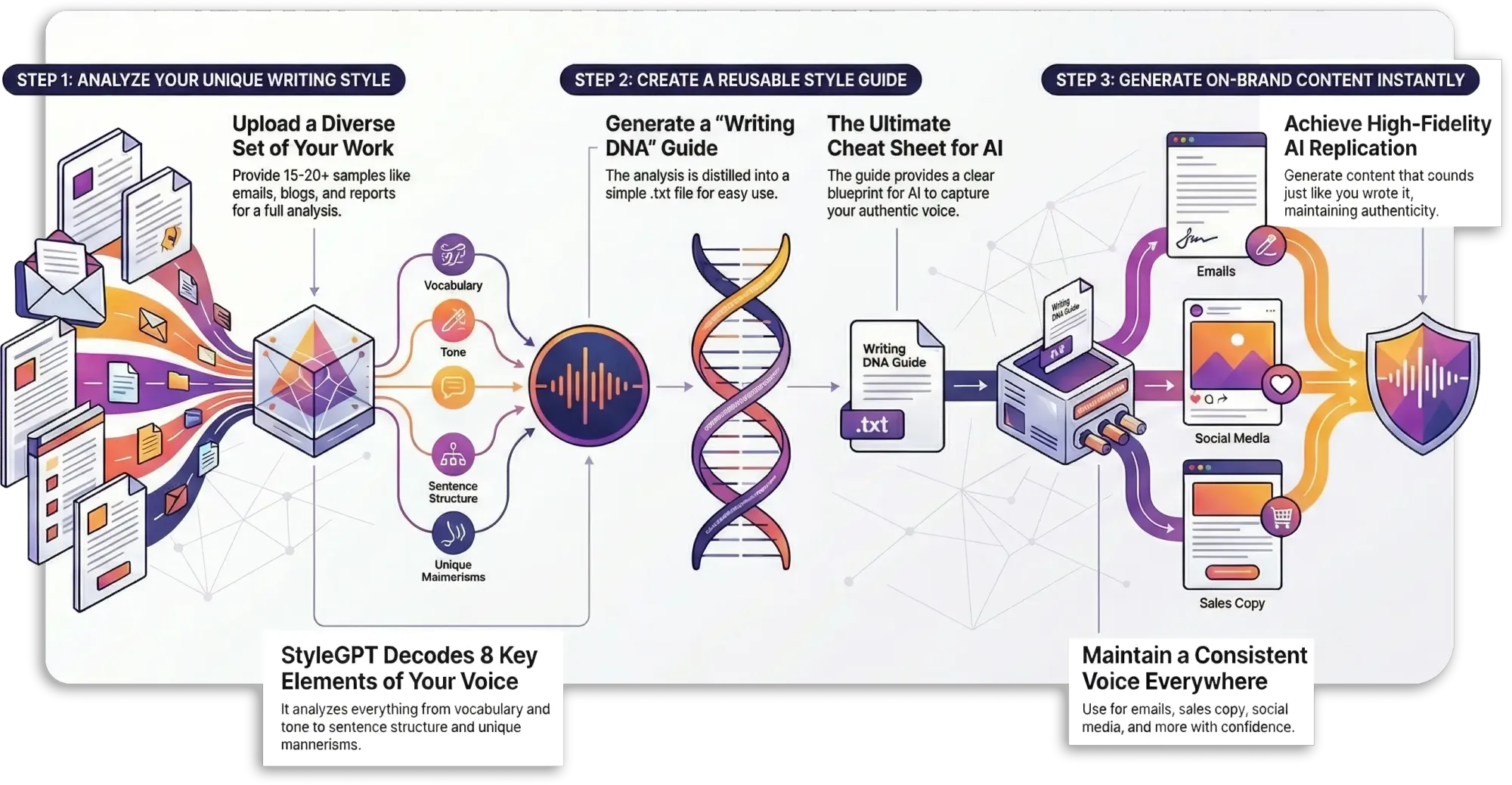

The tool itself is simple: it analyzes writing to strip out giveaways of AI-generated text, focusing on style markers such as vocabulary, sentence rhythm, and other signals. It then creates an AI-ready style guide, so users can get AI output that sounds up to 95% closer to their real voice.

One AI warned me: "If readers can't spot AI-generated content, this makes it too easy for voice imitation and fraud."

And the other warned: "Forget that - the real concern is that this tool keeps getting smarter the more you feed it."

Here's the thing. StyleGPT has been out for 2 years now, and our clients use it to make AI-generated writing sound genuinely like them from the start. While other people celebrate AI that sounds "less like a research paper", our clients are getting far deeper personalization, with output up to 95% closer to their unique voice.

Is this a bad thing? I don't think so.

Being able to onboard new hires with a locked-in brand voice instantly is great, because you know they are going to be using AI. Getting AI to generate drafts that already sound and read right is incredibly powerful, and it saves time.

Listen to the full discussion here: